COPYRIGHT LAWS AND AI

Intro: Creative artists and writers are voicing their anger at AI theft of their work with ‘Human Authored’ logos and an empty book. The government must listen.

DURING last week’s London Book Fair, The Society of Authors stamped its books with “Human Authored” logos, in scenes that might have come from a dystopian novel. It described its labelling scheme as “an important sticking plaster to protect and promote human creativity in lieu of AI labelled content in the marketplace”.

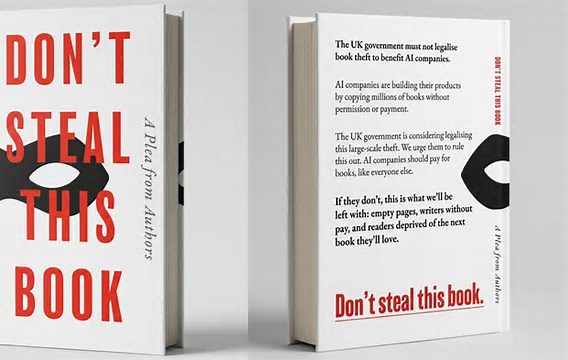

Entrants to the fair were also given copies of Don’t Steal This Book, an anthology of some 10,000 writers including Nobel laureate Kazuo Ishiguro, Malorie Blackman, Jeanette Winterson, and Richard Osman. The pages of the book are completely blank, but the back cover states: “The UK government must not legalise book theft to benefit AI companies.” The message is clear and simple: writers have had enough.

The book fair arrived before the government is due to deliver its progress report on AI and copyright, after proposals for a relaxation of existing laws caused outrage last year. Philippa Gregory, the novelist, described the plans for an “opt-out” policy, which puts the onus on writers to refuse permission for their work to be trawled, as akin to putting a sign on your front door asking burglars to pass by.

According to a University of Cambridge study last autumn, almost 60% of published authors believe their work has been used to train large language models without consent or reimbursement. And nearly 40% said their income had already fallen as a result of generative AI or machine-made novels, a digital incarnation of Orwell’s Versificator in Nineteen Eighty-Four.

Factual books are clearly most susceptible to ChatGPT and other generative AI tools. While sales in fiction are rising, sales of nonfiction were down 6% last year compared with 2024. But three nonfiction books, all by female authors, bucked the trend: Nobody’s Girl, Virginia Giuffre’s posthumous memoir of abuse; A Hymn to Life, Gisèle Pelicot’s testimony and account of her ordeal at the hands of her ex-husband; and Careless People, Sarah Wynn-Williams’s exposé of working at Facebook. The success of these first-person narrations shows the powerful reach of nonfiction beyond the world of publishing. These are painfully human stories; readers must be able to trust in the authenticity of their voices.

Last year, novelist Sarah Hall requested that her publisher, Faber, print a “Human Written” stamp on her latest book, Helm. “AI might mimic the words more rapidly, but . . . it hasn’t bled on the page,” she said. “And it doesn’t have a family to support.”

Writers’ livelihoods must not be sacrificed to the promise of economic growth. The UK’s creative industries contributed £124bn to the UK economy in 2023, of which £11bn came from publishing. The Society of Authors is requesting consent and fair payment for use of work, and transparency as to how a book was “written”. These are hardly radical propositions. But in an era of fake news and AI slop, they are sadly necessary. Writers and creative artists need more than sticking plasters. They need robust legislation.

A recently published House of Lords report lays out two possible futures: one in which the UK “becomes a world-leading home for responsible, legalised artificial intelligence (AI) development” and another in which it continues “to drift towards tacit acceptance of large-scale, unlicensed use of creative content”. One scenario protects UK artists, the other benefits global tech companies. To avoid a world of empty content, the choice is clear.